OPEN API OPEN WORLDS

InnovAnimatrix

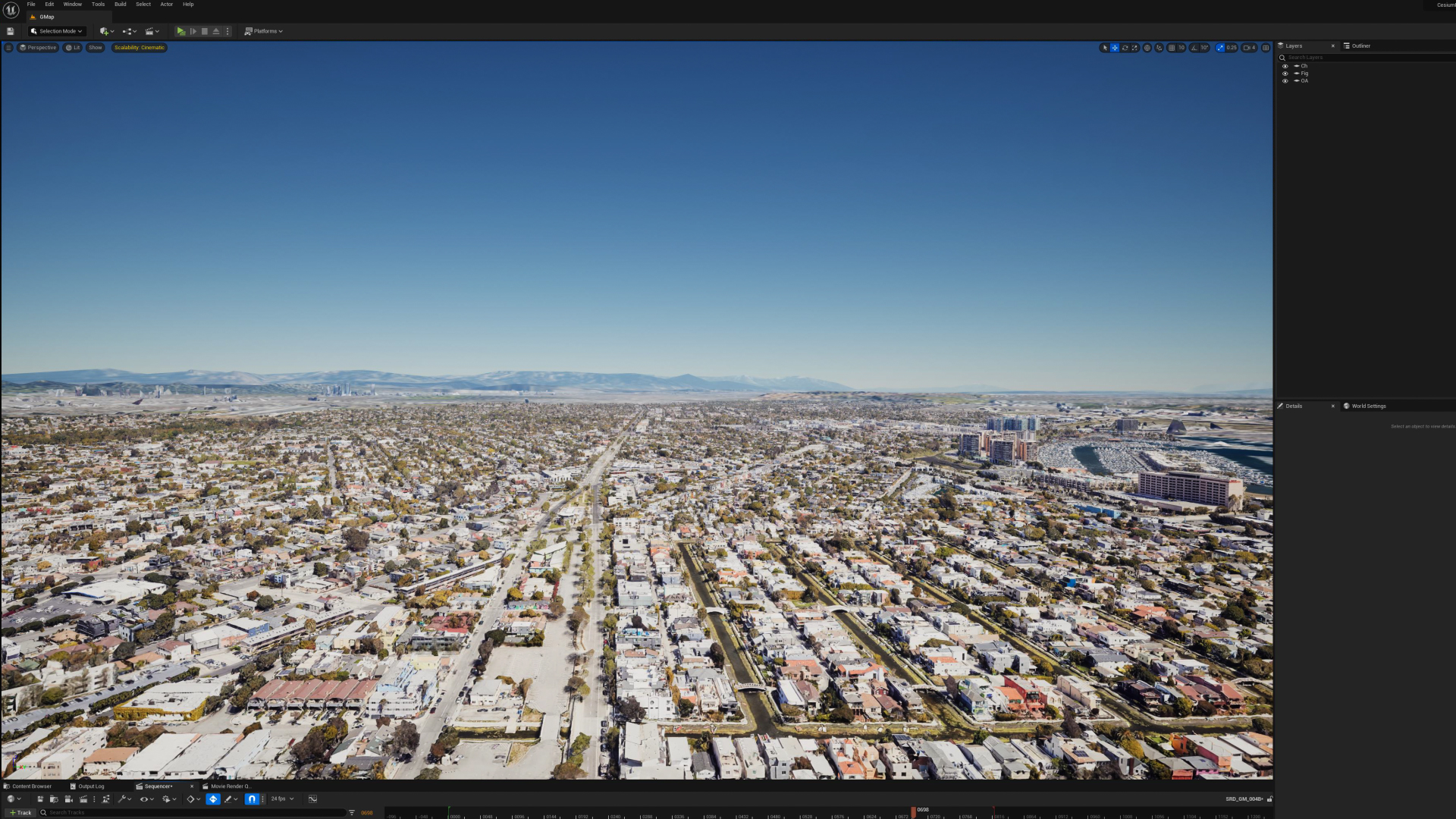

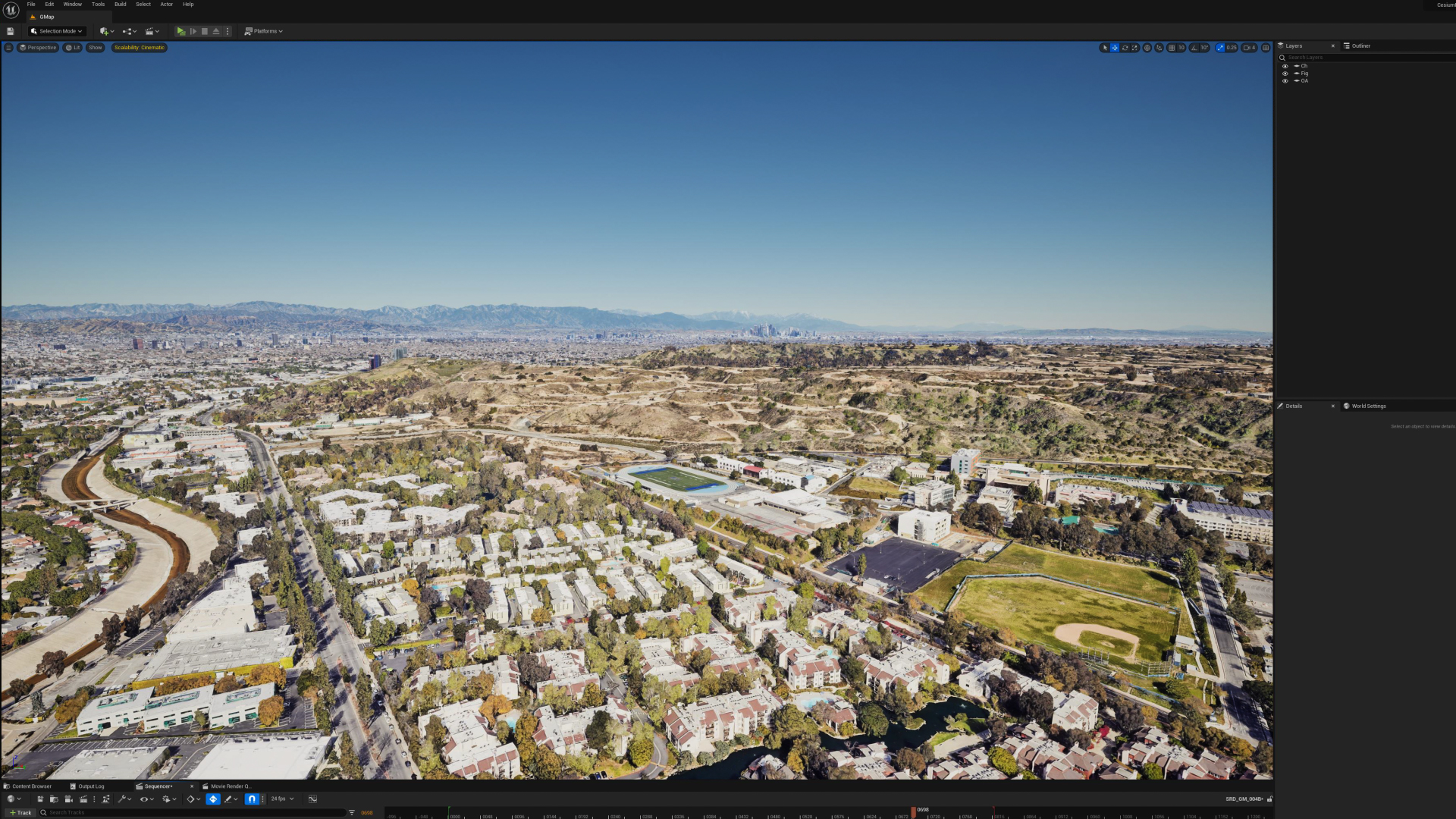

At the recent Google I/O, Google announced that the Map Tiles API is now available to developers and can be used with Cesium's Unreal plugin. Intrigued by the potential of this technology, I promptly set out to explore its capabilities. Alongside this, I had the opportunity to test-drive MusicLM in Google's AI Test Kitchen, a tool that generates music from text. Although some editing was required to synchronize the resulting score with my animation, the initial generation of the base music was remarkably swift. I look forward to the continued improvements this technology promises.

The implementation process was impressively straightforward. Armed with nothing more than an API key, I was able to use the API directly in Unreal Engine 5, with no need to go through Cesium ion.
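For readers who want to try the same setup, here is a minimal sketch of how the tileset URL can be assembled from an API key. The helper name `build_tileset_url` and the `YOUR_API_KEY` placeholder are my own illustration. The endpoint is the root tileset URL Google documents for Photorealistic 3D Tiles, and the assumption is that the result is pasted into the URL field of a Cesium 3D Tileset actor whose source is set to load from a URL rather than from Cesium ion.

```python
from urllib.parse import urlencode

# Root tileset endpoint for Google's Photorealistic 3D Tiles (Map Tiles API).
ROOT_TILESET_URL = "https://tile.googleapis.com/v1/3dtiles/root.json"


def build_tileset_url(api_key: str) -> str:
    """Return the root tileset URL with the API key attached as a query parameter.

    Hypothetical helper for illustration; it only builds a string and makes
    no network request.
    """
    if not api_key or not api_key.strip():
        raise ValueError("api_key must be a non-empty string")
    return f"{ROOT_TILESET_URL}?{urlencode({'key': api_key.strip()})}"


if __name__ == "__main__":
    # "YOUR_API_KEY" is a placeholder, not a working key.
    print(build_tileset_url("YOUR_API_KEY"))
```

Keeping the key out of the project files (for example, reading it from an environment variable before building the URL) is a sensible precaution, since the key is billed to your Google Cloud account.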

My past endeavors with Google Earth Studio had their limitations. In stark contrast, importing and manipulating colossal 3D map tiles in Unreal Engine was a refreshing experience, sparking renewed excitement.

Straying from traditional animation techniques, I adopted a novel approach for this project. Conventionally, animations zooming down from space direct the camera vertically downwards. This strategy simplifies the environment creation process and often employs elements like clouds to further restrict the field of view.

However, I chose a different path. I aligned the camera parallel to the surface, capturing a vast swath of the environment within the camera's field of view. The need for clouds or other obscuring elements was negated; all environmental details were instantly available as 3D data. By harnessing the full scope of Earth's 3D data, I simulated a true 'fall off' from space. For a touch of whimsy, I swiftly modeled and rigged Google's 'Pegman' as the guide for this map travel animation, humorously pursuing a Map Marker UFO through the streets of Los Angeles.
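To give a rough sense of how much terrain a level camera can take in, the sketch below estimates the distance to the horizon at a given altitude. It is not part of the project itself. It assumes a perfectly spherical Earth with a mean radius of 6371 km and ignores atmospheric refraction and terrain, and the function name `horizon_distance_km` is purely illustrative.

```python
import math

# Mean Earth radius in kilometres; treating the Earth as a sphere is an assumption.
EARTH_RADIUS_KM = 6371.0


def horizon_distance_km(altitude_km: float) -> float:
    """Straight-line distance from the camera to the geometric horizon.

    Derived from the right triangle formed by the Earth's centre, the camera,
    and the horizon point: d^2 + R^2 = (R + h)^2, so d = sqrt(h * (2R + h)).
    """
    if altitude_km < 0:
        raise ValueError("altitude_km must be non-negative")
    return math.sqrt(altitude_km * (2 * EARTH_RADIUS_KM + altitude_km))


if __name__ == "__main__":
    # Sample altitudes chosen for illustration only.
    for altitude in (1, 10, 100, 400):
        distance = horizon_distance_km(altitude)
        print(f"altitude {altitude:>4} km -> horizon about {distance:,.0f} km away")
```

Even at airliner altitude the geometric horizon is already hundreds of kilometres away, which is why a camera held parallel to the surface needs real 3D data all the way out rather than a small patch hidden behind clouds.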

The outcome was indeed striking. A simple camera movement revealed a panorama of terrestrial scenery, brimming with detail. Although the 3D models fall short in quality for close-ups, they offer more than enough detail for convincing long shots. They can easily serve as backdrops for matte paintings or for scenes without close-ups, which makes them an immense convenience for any animator.

Combining this technology with NeRF (Neural Radiance Fields) could open up a treasure trove of possibilities, pushing the frontiers of what's attainable in computer graphics. With this exciting future on the horizon, I remain eager to continue exploring and using these remarkable tools.

Crafted by Masaki Yokochi